The Problem

The client was a B2B services company receiving 80–120 inbound leads per day from multiple sources: their website, Meta Ads, Upwork, and referrals. Their sales team was manually reviewing each lead: reading the message, checking the lead's company on LinkedIn, and deciding whether to follow up.

This manual triage process took up to 4 hours each day, occupied two full-time sales reps, and — critically — caused hot leads to go cold before anyone responded. The business was losing real revenue from slow response times.

- 4-hour average response time (commonly cited benchmark for converting inbound leads: under 5 minutes)

- No prioritisation system — hot and cold leads treated identically

- Inconsistent qualification criteria between sales reps

- No automatic CRM record creation — all done manually

The Solution

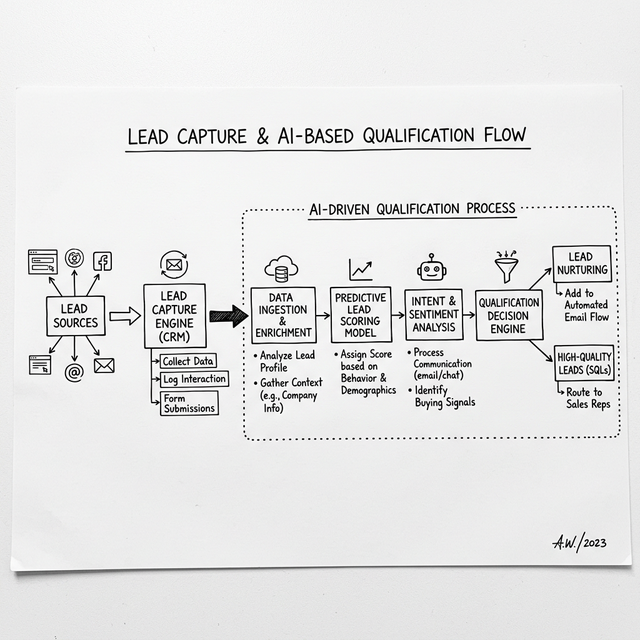

I designed and built a fully automated AI lead qualification pipeline using Make.com as the orchestration layer, OpenAI GPT-4 for intelligent scoring, and HubSpot as the CRM destination.

The system works as follows: every inbound lead from every source (webhook, form, or API) is captured in real time. The full lead message is then sent to GPT-4 with a custom scoring prompt I engineered specifically for this client's ideal customer profile.

"Hot leads (score 7+) now receive an automated personalised response within 90 seconds, before a human even sees the lead."

GPT-4 returns a JSON payload containing: a lead score from 1–10, a confidence indicator, a one-line summary of the lead's key problem, and a recommended action. Make.com then routes each lead through a conditional logic tree based on the score.
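The production pipeline handles this payload inside Make.com, but the shape of the data is easier to see in code. The Python sketch below is illustrative only: the field names (`score`, `confidence`, `summary`, `recommended_action`) and the string type of the confidence indicator are assumptions, since the exact schema is not shown here. It parses the model's reply and rejects anything malformed before routing.

```python
import json
from dataclasses import dataclass

# Hypothetical field names; the real payload keys are not documented here.
REQUIRED_FIELDS = {"score", "confidence", "summary", "recommended_action"}


@dataclass(frozen=True)
class LeadScore:
    score: int               # lead score from 1 to 10
    confidence: str          # assumed to be a label such as "high" or "low"
    summary: str             # one-line summary of the lead's key problem
    recommended_action: str  # what the pipeline should do next


def parse_lead_score(raw: str) -> LeadScore:
    """Parse and validate the model's JSON reply; raise ValueError if malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")

    score = data["score"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 10:
        raise ValueError(f"score must be an integer from 1 to 10, got {score!r}")

    return LeadScore(
        score=score,
        confidence=str(data["confidence"]),
        summary=str(data["summary"]).strip(),
        recommended_action=str(data["recommended_action"]),
    )
```

Validating before routing means a malformed reply fails loudly instead of silently sending a lead down the wrong branch.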

Technical Architecture

The Results

Within the first week of deployment, the results were measurable and immediate:

- Lead response time dropped from 4 hours to under 90 seconds for hot leads

- Sales team reclaimed 15+ hours per week previously spent on manual triage

- Lead qualification accuracy benchmarked at 92% (vs. ~70% consistency with manual review)

- Zero leads falling through — 100% of inbound traffic now captured and classified

The client's close rate on hot leads (score 7+) improved by 28% in the first month, primarily due to the response time improvement. The system has been running in production for 9+ months with zero downtime.

Key Learnings

The most important factor in the system's success was prompt engineering. Vague prompts produce inconsistent scores. I iterated through 12 versions of the qualification prompt before settling on the final version — testing against a library of 200 historical leads with known outcomes.
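To make the testing step concrete, here is a minimal Python sketch of how one prompt version could be checked against labelled historical leads. It is not the harness used on the project: the `score_lead` callable, the `(message, was_good_lead)` pair format, and the choice of "hot vs. not hot" agreement as the metric are all assumptions made for illustration. Only the 7+ hot threshold comes from the case study.

```python
from typing import Callable, Iterable, Tuple

HOT_THRESHOLD = 7  # "hot" means a score of 7 or more


def hot_lead_accuracy(
    score_lead: Callable[[str], int],
    labelled_leads: Iterable[Tuple[str, bool]],
) -> float:
    """Fraction of leads where (score >= 7) agrees with the known outcome."""
    total = 0
    correct = 0
    for message, was_good_lead in labelled_leads:
        predicted_hot = score_lead(message) >= HOT_THRESHOLD
        if predicted_hot == was_good_lead:
            correct += 1
        total += 1
    if total == 0:
        raise ValueError("no labelled leads supplied")
    return correct / total


def keyword_scorer(message: str) -> int:
    """Stand-in scorer for the example; a real run would call the model."""
    return 9 if "budget" in message.lower() else 3
```

Running the same labelled set through each prompt version, with `score_lead` wrapping the model call for that version, gives one comparable number per iteration.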

The second key factor was building a human review queue for borderline leads (scores 5–6). This gives full automation for clearly hot and clearly cold leads while preserving human judgment for the hard cases.
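The three-way split can be sketched in a few lines of Python. In production this logic lives in Make.com's conditional routes, so the code below is an illustration rather than the deployed implementation. The thresholds (7+ hot, 5–6 borderline) come from the text above; the route names and the treatment of scores below 5 as the cold branch are assumptions.

```python
from enum import Enum


class Route(Enum):
    AUTO_RESPOND = "auto_respond"  # hot: score 7+
    HUMAN_REVIEW = "human_review"  # borderline: score 5-6
    AUTO_COLD = "auto_cold"        # cold: score below 5 (assumed handling)


def route_lead(score: int) -> Route:
    """Map a 1-10 lead score to one of the three pipeline branches."""
    if not 1 <= score <= 10:
        raise ValueError(f"score must be between 1 and 10, got {score}")
    if score >= 7:
        return Route.AUTO_RESPOND
    if score >= 5:
        return Route.HUMAN_REVIEW
    return Route.AUTO_COLD
```

Keeping the borderline band explicit makes it easy to widen or narrow the review queue later without touching the hot and cold paths.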